Explainable AI - Concepts

There are many definitions of what explanations are in the context of explainable AI, such as:

Explanations are answers to questions about the model's predictions or operation.

How does it operate? Why does it make a certain prediction? What is the role of a certain neuron or layer?

In contrast to explainable models, black-box models share no insight about their operation or predictions.

Multiple Dimensions

The questions and the answers depend on the audience, the context and other dimensions.

One paper on XAI for radiology found that doctors preferred text outputs combined with saliency maps to aid their decisions (in a sense, emulating doctor-to-doctor communication). The output was then evaluated by a clinician.

Similarly, in synthetic chemistry, new candidates are vetted by expert chemists. Again, text can be a useful piece of information for the expert to work on, consider, and evaluate. However, with no "human in the loop", this approach isn't enough.

The list of explanation dimensions may be infinite. A few are:

- Intent, Purpose, Stakes: such as transparency, trust, or ethical reasons. Relevant domains include healthcare, finance, energy, military, general agentic systems, and automated decision-making.

- Yet in other scenarios, explainability may not matter; only the model's output does, and a human expert can evaluate it.

- User Type / Audiences: Each audience has its own goals, risks, and preferences. Examples: scientists, ML practitioners, developers, non-experts.

- Stages: pre-modelling, modelling, post-modelling.

- Kind: Visual, Textual, Formal. More below.

Yet another dimension, related to the kind of explanation and its complexity, is summarized in The perils and pitfalls of explainable AI: Strategies for explaining algorithmic decision-making:

Belle and Papantonis (2021) provide four suggestions for creating explainability, including explanation by simplification, describing the contribution of each feature to the decisions, explaining an instance instead of in general, and using graphical visualization methods for explanations. At the same time, they also discuss the complexity of realizing such suggestions. Simplifications might not be correct, features can be interrelated, local explanations can fail to provide the complete picture, and graphical visualization requires assumptions about data that might not necessarily be true.
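To make "describing the contribution of each feature" concrete for a single instance, here is a minimal sketch that computes exact Shapley values by averaging marginal contributions over all feature orderings. The toy `model`, the input `x`, and the all-zeros `baseline` are assumptions made up for illustration; this is not the API of the SHAP library, and the brute-force enumeration only scales to a handful of features.

```python
from itertools import permutations

def model(x):
    """Hypothetical toy model: features 1 and 2 only matter jointly."""
    return 2.0 * x[0] + x[1] * x[2]

def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x, relative to a baseline input.

    A feature that is 'absent' from a coalition keeps its baseline value.
    """
    n = len(x)
    phi = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)      # start with every feature absent
        prev = f(current)
        for i in order:
            current[i] = x[i]         # switch feature i on
            value = f(current)
            phi[i] += value - prev    # marginal contribution of feature i
            prev = value
    return [p / len(orderings) for p in phi]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]            # assumed reference point
phi = shapley_values(model, x, baseline)
print(phi)
```

The contributions sum to `model(x) - model(baseline)`, and the product term is split evenly between features 1 and 2. That even split is one form of the caveat quoted above: per-feature numbers cannot show that two interrelated features contribute only together.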

Disentangling Dimensions

We can consider characteristics of explanations independent of the audience and context:

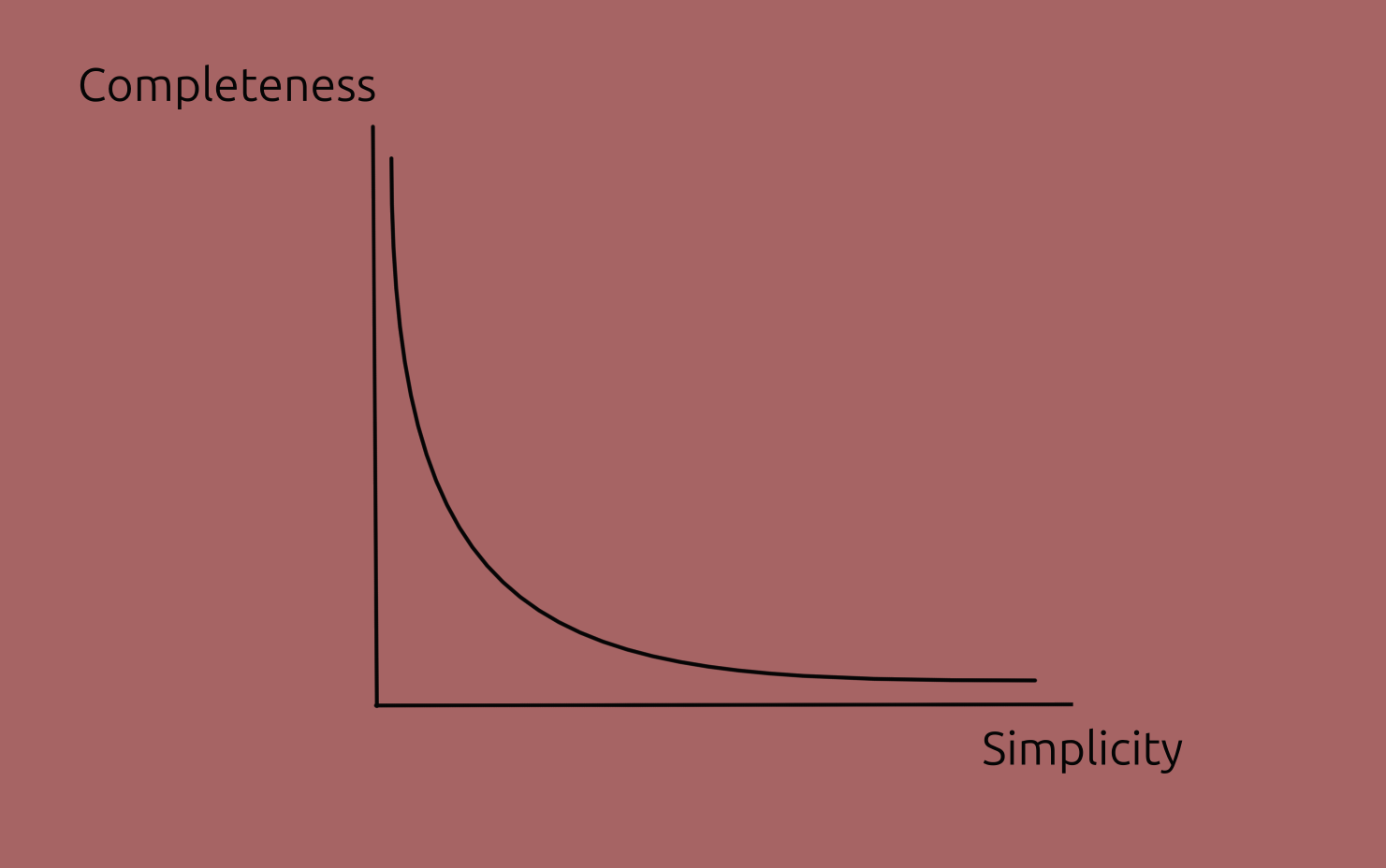

- Simplicity: how easy the explanation is to understand. (The opposite term, complexity, could be used as well.)

- This is correlated with how simple the model itself is.

- Completeness: how accurately the explanation describes the model's behaviour.

- Level / Mereological: High level or lower level; coarse grained or detailed; selection of parts and functions.

- Internal or external

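Simplicity and completeness often pull against each other: a simpler explanation tends to describe the model less completely. This trade-off isn't universal but just a common case, particularly in deep learning; some other models are straightforward, in which case both characteristics can be high.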
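The trade-off between simplicity and completeness can be made concrete with a toy surrogate. In the sketch below, a piecewise-constant "explanation" approximates a stand-in black box. The number of bins is an assumed proxy for explanation complexity, and the mean squared gap to the black box is an assumed proxy for (lack of) completeness. All function names are hypothetical.

```python
import math

def black_box(x):
    """Hypothetical stand-in for an opaque model on inputs in [0, 1)."""
    return math.sin(6.0 * x) + 0.5 * x

def fit_surrogate(f, xs, n_bins):
    """Piecewise-constant surrogate: predict the mean of f within each bin."""
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for x in xs:
        b = int(x * n_bins)           # index of the equal-width bin holding x
        sums[b] += f(x)
        counts[b] += 1
    means = [s / c for s, c in zip(sums, counts)]
    return lambda x: means[int(x * n_bins)]

def infidelity(f, g, xs):
    """Mean squared gap between the model f and its explanation g."""
    return sum((f(x) - g(x)) ** 2 for x in xs) / len(xs)

# Evenly spaced sample points in [0, 1); every bin below is non-empty.
xs = [(i + 0.5) / 256 for i in range(256)]

errors = {}
for n_bins in (1, 2, 4, 8, 16):
    surrogate = fit_surrogate(black_box, xs, n_bins)
    errors[n_bins] = infidelity(black_box, surrogate, xs)
    print(f"{n_bins:2d} bins -> infidelity {errors[n_bins]:.4f}")
```

A one-bin surrogate is trivially simple but says almost nothing about the model. Sixteen bins track it more closely at the cost of a longer description. For a model that is itself simple, such as a constant or a single step, a short surrogate would already be complete, which matches the non-universal case mentioned above.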

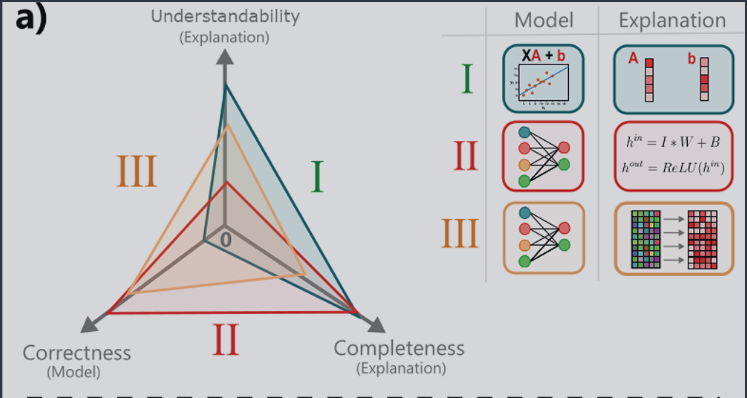

Predictive power

Predictive power is a characteristic of a model, not of an explanation of a model. Yet it is correlated with the characteristics given earlier: more predictive models tend to be more complex, which makes them harder to explain.

The reason to include it here is that predictive power plays an important role in deciding which model to use.

In the image below, note that understandability replaces simplicity, and correctness replaces predictive power.

Image from paper under CC-BY-SA 4.0

Let's now look at some actual methods.

Sources:

- A Unified Approach to Interpreting Model Predictions (2017),

- Explaining Explanations: An Overview of Interpretability of Machine Learning (2018),

- [Producing radiologist-quality reports for interpretable artificial intelligence][radiology] (2018),

- The perils and pitfalls of explainable AI: Strategies for explaining algorithmic decision-making (2021); this paper is a particularly short and accessible introduction,

- Interpretable and Explainable Machine Learning for Materials Science and Chemistry (2022),

- Blog Posts: What is Explainable AI? (2022) and from IBM,

- A Perspective on Explainable Artificial Intelligence Methods: SHAP and LIME (2024).