Explainable AI

Having defined causal explanations, we can define model explainability, the focus of Explainable Artificial Intelligence (XAI), as:

finding the causes underlying a model's predictions or operation.

But can a model be pragmatically considered explainable if its explanations cannot be communicated to the target audience?

It should also be noted that, while explanations are often framed causally, they may involve non-causal relations such as correlations, constraints, or contributions (as in LIME and SHAP), especially in XAI.

We can amend the definition of model explainability to better fit the three-legged definition of explanations given earlier:

the degree to which humans can effectively answer questions about a model's predictions or operation, either directly or using explainability methods.

"Questions" covers more than just why-questions: answers may rest on associations and contributions, so we will not necessarily arrive at a causal structure.

"Effectively" covers the social and communicative aspect of explanation (which Grice's maxims help with).

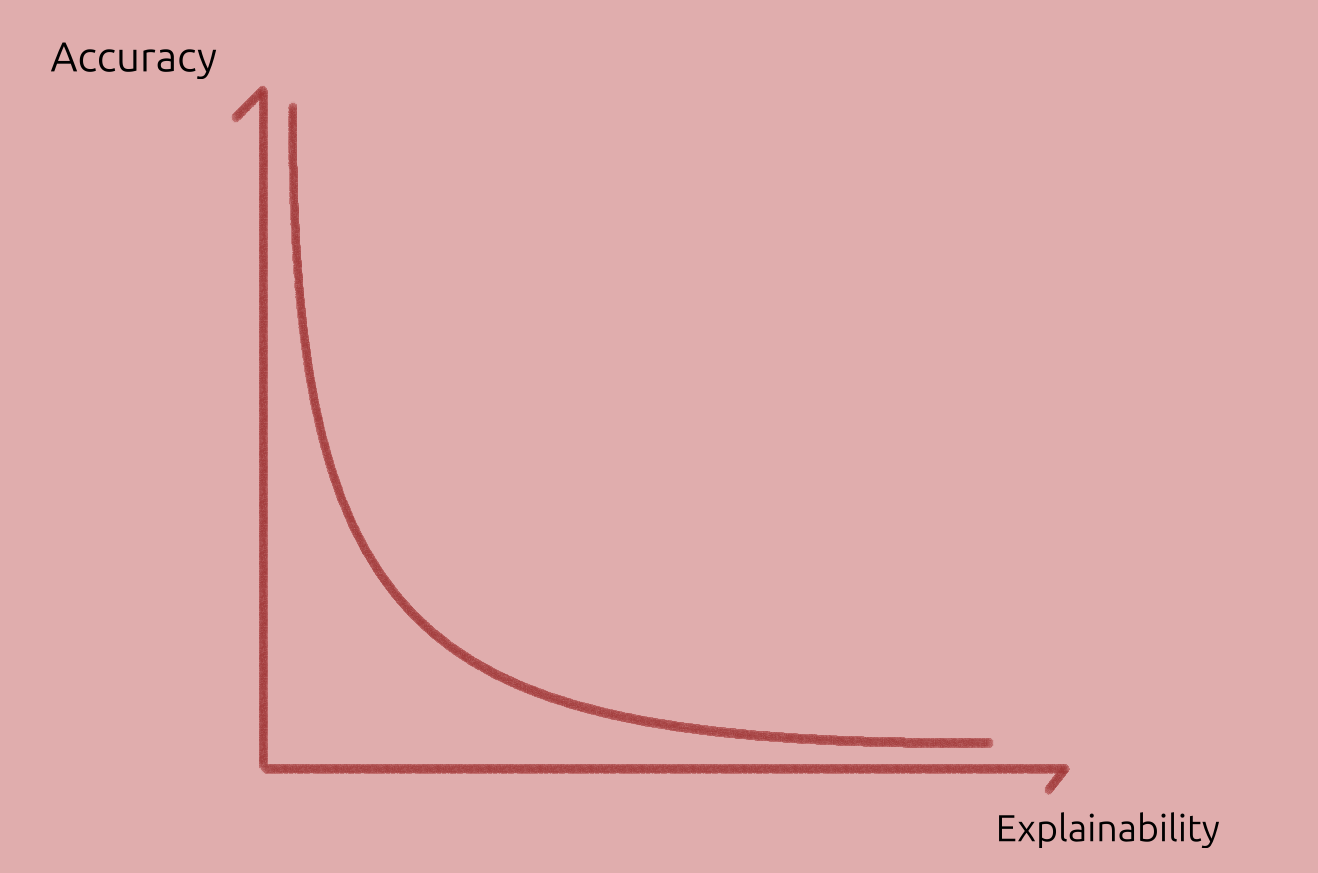

Trade-off

One trade-off is that each audience demands certain guarantees and brings its own expectations and expertise, yet we do not want to lose much fidelity to the original model.

Simplification loses fidelity. Care must be taken to make "things as simple as possible, but not simpler", or there is a risk of oversimplifying. This is compounded by the fact that more complex and accurate models tend to be less explainable.

This is not universal, but we could represent this common case as:

Overview of methods

Within the cognitive process of explanations, model explainability benefits from methods to identify causes or relevant properties.

For all audiences, we can group these methods into more general categories, and then go into specific cases for a certain audience.

Kinds of Methods

The survey Principles and practice of explainable machine-learning (2021) includes a table of method kinds. A modified version of that table is below:

| Kind | Advantages | Disadvantages | Question |
|---|---|---|---|
| Local explanations | Explains the model's behaviour in a local area of interest. Operates on instance-level explanations. | Explanations do not generalize on a global scale. Small perturbations might result in very different explanations. | How do small perturbations affect the output / prediction? |
| Examples | Representative items for each class provide insights about the model's internal reasoning. | Examples require human selection. They do not explicitly state what parts of the example influence the model. | How do inputs from different classes compare? And inputs from the same class? |
| Feature relevance | They operate on an instance level (some can operate globally). | Methods may make assumptions which do not hold (e.g. feature independence, linearity). | Which input features are most important? |
| Simplification | Simple surrogate models explain opaque ones. | Surrogate models may not approximate original models well. | Can we get local insights by using a simpler model? |
| Visualizations | Easier to communicate to non-technical audiences. Most approaches are intuitive and not hard to implement. | There is an upper bound on how many features can be considered at once. Humans must inspect plots to derive explanations. | Where are the class boundaries? |
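As a rough illustration of the feature relevance row, the sketch below estimates permutation importance: shuffle one feature at a time and measure how much the model's accuracy drops. Everything in it is an assumption made for illustration. `black_box` is a hypothetical stand-in for an opaque model, the data is synthetic, and the labels are the model's own outputs, so the scores describe what the model relies on rather than what is true of the world.

```python
import random


def black_box(row):
    """Hypothetical opaque model: leans on x0, slightly on x1, ignores x2."""
    x0, x1, x2 = row
    return 1 if 3.0 * x0 + 0.5 * x1 > 1.75 else 0


def accuracy(model, rows, labels):
    """Fraction of rows where the model's output matches the label."""
    hits = sum(model(r) == y for r, y in zip(rows, labels))
    return hits / len(rows)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle column j only, leaving the other features untouched.
            column = [r[j] for r in rows]
            rng.shuffle(column)
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


# Synthetic data: three independent uniform features (an assumption).
data_rng = random.Random(42)
rows = [(data_rng.random(), data_rng.random(), data_rng.random())
        for _ in range(500)]
labels = [black_box(r) for r in rows]  # baseline accuracy is 1.0 by construction

importances = permutation_importance(black_box, rows, labels)
for name, score in zip(["x0", "x1", "x2"], importances):
    print(f"{name}: {score:.3f}")
```

Shuffling a column independently of the others is exactly the kind of feature-independence assumption listed as a disadvantage in the table; with correlated features the scores can mislead.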

We should remember that:

Relying on only one technique will only give us a partial picture of the whole story, possibly missing out important information. Hence, combining multiple approaches together provides for a more cautious way to explain a model. (...) At this point we would like to note that there is no established way of combining techniques (in a pipeline fashion),

In the next posts, we will focus on methods that aid causal attribution (the cognitive process of explanation), with a scientific audience in mind.

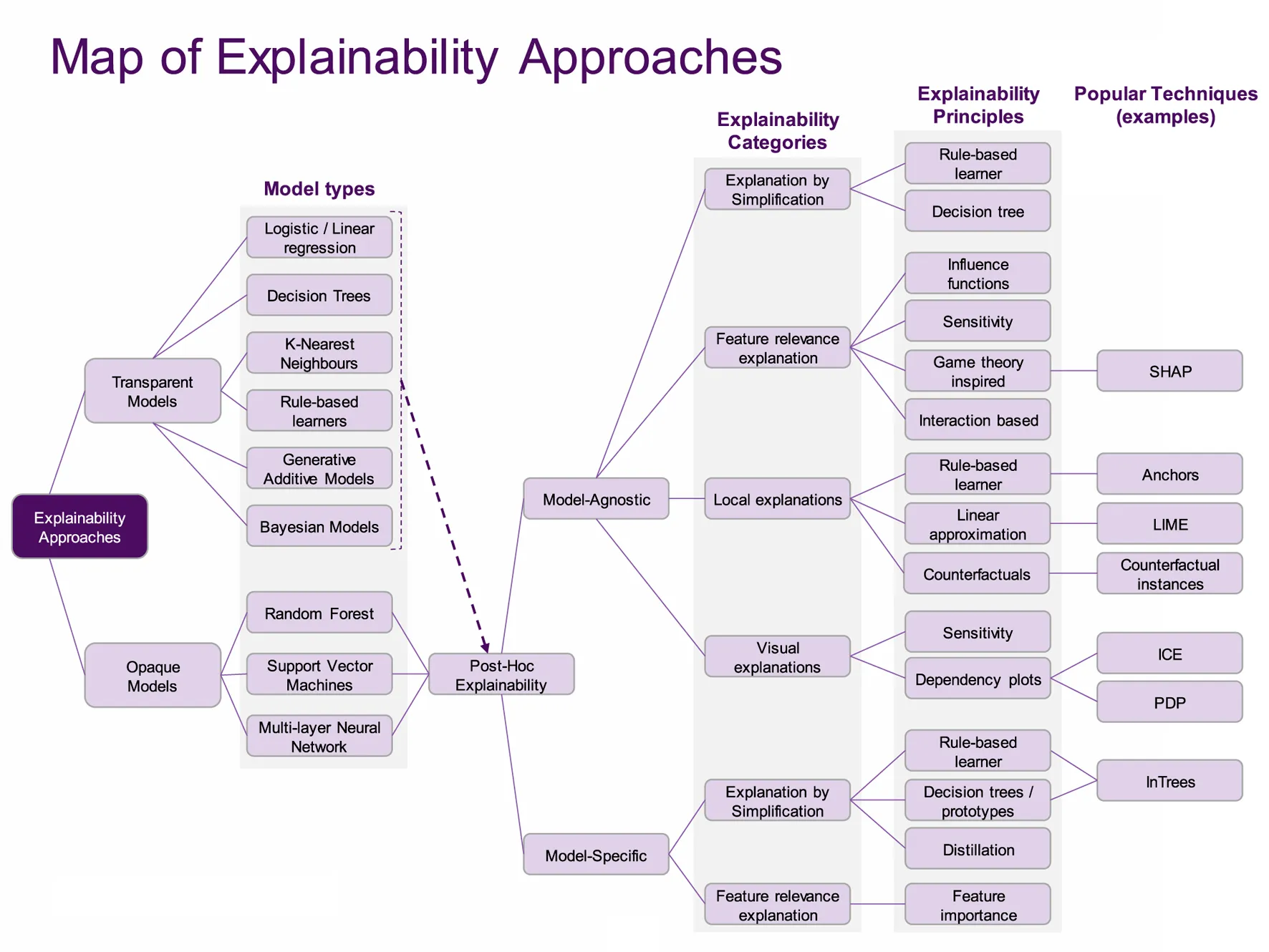

Map of XAI

An interesting map of XAI is given in the survey Principles and practice of explainable machine-learning (2021).

Most classic ML models are in the dashed area under the Model types column.

Classic ML models are usually transparent (intrinsically explainable) but may still benefit from post-hoc (post-training) explanations, such as visualisations. When transparency is key and their predictions are accurate enough, they may be preferred over DL models.
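To make "intrinsically explainable" concrete, here is a minimal sketch of a linear model whose prediction decomposes exactly into one contribution per feature. The weights, bias and feature names are hypothetical, chosen only for illustration; they do not come from the survey.

```python
def linear_predict(weights, bias, features):
    """Prediction of a linear model: bias plus the weighted sum of features."""
    return bias + sum(weights[name] * value for name, value in features.items())


def contributions(weights, features):
    """Per-feature contribution to the prediction (weight times value)."""
    return {name: weights[name] * value for name, value in features.items()}


# Hypothetical model parameters and a single hypothetical input.
weights = {"rooms": 2.0, "age": -0.5, "distance": -1.0}
bias = 10.0
house = {"rooms": 3.0, "age": 4.0, "distance": 2.0}

parts = contributions(weights, house)
prediction = linear_predict(weights, bias, house)

print(parts)       # {'rooms': 6.0, 'age': -2.0, 'distance': -2.0}
print(prediction)  # 12.0

# The explanation is exact: bias plus contributions reproduces the prediction.
assert bias + sum(parts.values()) == prediction
```

No separate explainability method is needed here, because the explanation is read directly off the model's parameters. An opaque model offers no such decomposition, which is why post-hoc methods try to approximate one.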

The focus here, though, is explaining deep learning models. These are usually opaque ("black-box") models, and their accuracy is usually higher than that of classic ML models.

In other words, classical ML and DL models each have their use-cases.

List of sources used in this blogpost

- Principles and practice of explainable machine-learning (2021, 25 pages): Sections 8–11 are a useful review of explainability methods.